
The source code comes from the internet.

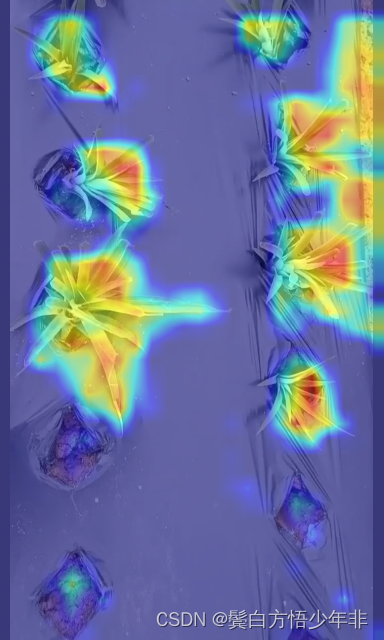

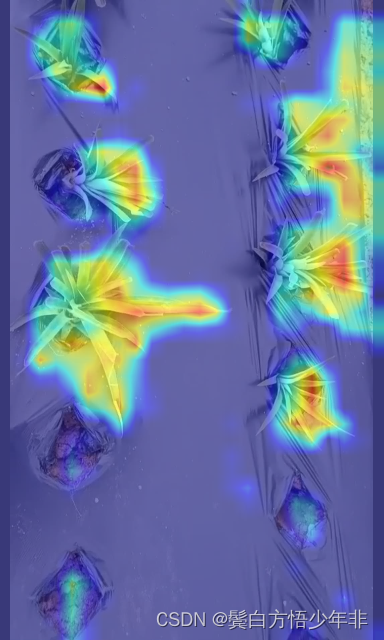

This post uses `pytorch_grad_cam` to generate Grad-CAM heatmaps of a YOLOv8 model for a given image.
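Plain Grad-CAM is a short computation. Each channel of the chosen layer's activations is weighted by the spatial mean of its gradient. The weighted channels are then summed, negative values are clipped, and the result is scaled to [0, 1]. The script in this post carries out these steps by hand inside its loop. The following is a minimal NumPy sketch of that computation. It assumes `activations` and `gradients` are arrays of shape (batch, channels, height, width) taken from the same layer. The function name `grad_cam` is illustrative only and is not part of `pytorch_grad_cam`. GradCAMPlusPlus and XGradCAM compute the channel weights differently.

```python
import numpy as np


def grad_cam(activations: np.ndarray, gradients: np.ndarray) -> np.ndarray:
    """Plain Grad-CAM map, shape (batch, h, w), scaled to [0, 1].

    activations, gradients: (batch, channels, h, w) arrays from one layer.
    """
    # Channel weights: global average of each channel's gradient -> (b, k)
    weights = gradients.mean(axis=(2, 3))
    # Weighted sum over channels, then ReLU keeps only positive evidence -> (b, h, w)
    cam = np.maximum((weights[:, :, None, None] * activations).sum(axis=1), 0)
    # Min-max normalise each map; a flat map is returned as all zeros
    lo = cam.min(axis=(1, 2), keepdims=True)
    hi = cam.max(axis=(1, 2), keepdims=True)
    span = np.where(hi - lo > 0, hi - lo, 1.0)
    return (cam - lo) / span
```

The script skips a flat map (maximum equal to minimum) with `continue`. This sketch instead returns zeros in that case, so the caller can decide what to do with it.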

Install the heatmap library:

```shell
pip install grad-cam
```

The package is published on PyPI as `grad-cam` and is imported in Python as `pytorch_grad_cam`.
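Before you run the script, it can help to confirm that the current interpreter can find every top-level module the script imports. Here is a minimal sketch that uses only the standard library. The helper name `is_installed` is hypothetical.

```python
import importlib.util


def is_installed(module_name: str) -> bool:
    """Return True if a top-level module can be found by this interpreter."""
    return importlib.util.find_spec(module_name) is not None


if __name__ == "__main__":
    # Top-level modules imported by the heatmap script
    for name in ("pytorch_grad_cam", "ultralytics", "cv2", "torch", "tqdm", "PIL"):
        print(f"{name}: {'OK' if is_installed(name) else 'missing'}")
```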

```python
# Parameters in get_params:
# weight:
#     Model weight file, yolov8m.pt by default.
# cfg:
#     Model config file, yolov8m.yaml by default. It must match the configuration
#     the weights in `weight` were trained with, otherwise loading raises an error.
# device:
#     Whether to run on the GPU or the CPU.
# method:
#     Grad-CAM variant, GradCAM by default. Several are provided and results can
#     differ somewhat between them, so feel free to experiment.
# layer:
#     Layer to visualize. Only the index needs changing, e.g. model.model[9]
#     for layer 9.
# backward_type:
#     What to backpropagate: the class score ('class'), the four box
#     coordinates ('box'), or both ('all'). 'all' usually works slightly better.
# conf_threshold:
#     Confidence threshold, 0.6 by default (get_params below uses 0.65).
# ratio:
#     0.02 by default. Predictions are ranked by confidence and only the top
#     fraction is kept; 0.02 means heatmaps are generated for the top 2%.
import warnings
warnings.filterwarnings('ignore')
warnings.simplefilter('ignore')

import os
import shutil

import cv2
import numpy as np
import torch
from PIL import Image
from tqdm import trange

np.random.seed(0)

from ultralytics.nn.tasks import DetectionModel as Model
from ultralytics.utils.torch_utils import intersect_dicts
from ultralytics.utils.ops import xywh2xyxy
from pytorch_grad_cam import GradCAMPlusPlus, GradCAM, XGradCAM  # selected via eval(method)
from pytorch_grad_cam.utils.image import show_cam_on_image
from pytorch_grad_cam.activations_and_gradients import ActivationsAndGradients


def letterbox(im, new_shape=(640, 640), color=(114, 114, 114), auto=True, scaleFill=False, scaleup=True, stride=32):
    # Resize and pad image while meeting stride-multiple constraints
    shape = im.shape[:2]  # current shape [height, width]
    if isinstance(new_shape, int):
        new_shape = (new_shape, new_shape)

    # Scale ratio (new / old)
    r = min(new_shape[0] / shape[0], new_shape[1] / shape[1])
    if not scaleup:  # only scale down, do not scale up (for better val mAP)
        r = min(r, 1.0)

    # Compute padding
    ratio = r, r  # width, height ratios
    new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))
    dw, dh = new_shape[1] - new_unpad[0], new_shape[0] - new_unpad[1]  # wh padding
    if auto:  # minimum rectangle
        dw, dh = np.mod(dw, stride), np.mod(dh, stride)  # wh padding
    elif scaleFill:  # stretch
        dw, dh = 0.0, 0.0
        new_unpad = (new_shape[1], new_shape[0])
        ratio = new_shape[1] / shape[1], new_shape[0] / shape[0]  # width, height ratios

    dw /= 2  # divide padding into 2 sides
    dh /= 2

    if shape[::-1] != new_unpad:  # resize
        im = cv2.resize(im, new_unpad, interpolation=cv2.INTER_LINEAR)
    top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))
    left, right = int(round(dw - 0.1)), int(round(dw + 0.1))
    im = cv2.copyMakeBorder(im, top, bottom, left, right, cv2.BORDER_CONSTANT, value=color)  # add border
    return im, ratio, (dw, dh)


class yolov8_heatmap:
    def __init__(self, weight, cfg, device, method, layer, backward_type, conf_threshold, ratio):
        device = torch.device(device)
        # Load onto the CPU first. On PyTorch >= 2.6 you may also need weights_only=False,
        # because the checkpoint stores the whole pickled model object.
        ckpt = torch.load(weight, map_location='cpu')
        model_names = ckpt['model'].names
        csd = ckpt['model'].float().state_dict()  # checkpoint state_dict as FP32
        model = Model(cfg, ch=3, nc=len(model_names)).to(device)
        csd = intersect_dicts(csd, model.state_dict(), exclude=['anchor'])  # intersect
        model.load_state_dict(csd, strict=False)  # load
        model.eval()
        print(f'Transferred {len(csd)}/{len(model.state_dict())} items')

        target_layers = [eval(layer)]  # e.g. 'model.model[6]' -> that module of the model above
        method = eval(method)  # e.g. 'GradCAM' -> the imported class

        colors = np.random.uniform(0, 255, size=(len(model_names), 3)).astype(np.int32)
        self.__dict__.update(locals())  # keep all of the above as attributes

    def post_process(self, result):
        # result: raw head output (1, 4 + nc, N); rows 0-3 are xywh boxes, the rest class scores
        logits_ = result[:, 4:]
        boxes_ = result[:, :4]
        # Rank the N candidates by their best class score, highest first
        _, indices = torch.sort(logits_.max(1)[0], descending=True)
        logits_sorted = torch.transpose(logits_[0], dim0=0, dim1=1)[indices[0]]  # (N, nc)
        boxes_sorted = torch.transpose(boxes_[0], dim0=0, dim1=1)[indices[0]]  # (N, 4) xywh
        return logits_sorted, boxes_sorted, xywh2xyxy(boxes_sorted).cpu().detach().numpy()

    def draw_detections(self, box, color, name, img):
        xmin, ymin, xmax, ymax = list(map(int, list(box)))
        cv2.rectangle(img, (xmin, ymin), (xmax, ymax), tuple(int(x) for x in color), 2)
        cv2.putText(img, str(name), (xmin, ymin - 5), cv2.FONT_HERSHEY_SIMPLEX, 0.8, tuple(int(x) for x in color), 2,
                    lineType=cv2.LINE_AA)
        return img

    def __call__(self, img_path, save_path):
        # remove dir if exist
        if os.path.exists(save_path):
            shutil.rmtree(save_path)
        # make dir if not exist
        os.makedirs(save_path, exist_ok=True)

        # img process
        img = cv2.imread(img_path)
        if img is None:
            raise FileNotFoundError(f'Cannot read image: {img_path}')
        img = letterbox(img)[0]
        img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
        img = np.float32(img) / 255.0
        tensor = torch.from_numpy(np.transpose(img, axes=[2, 0, 1])).unsqueeze(0).to(self.device)

        # init ActivationsAndGradients
        grads = ActivationsAndGradients(self.model, self.target_layers, reshape_transform=None)

        # get ActivationsAndResult
        result = grads(tensor)
        activations = grads.activations[0].cpu().detach().numpy()

        # postprocess to yolo output
        post_result, pre_post_boxes, post_boxes = self.post_process(result[0])
        print(f'{post_result.size(0)} candidate predictions')
        for i in trange(int(post_result.size(0) * self.ratio)):
            if float(post_result[i].max()) < self.conf_threshold:
                break  # candidates are sorted, so the rest are lower too

            self.model.zero_grad()
            # get max probability for this prediction
            if self.backward_type == 'class' or self.backward_type == 'all':
                score = post_result[i].max()
                score.backward(retain_graph=True)

            if self.backward_type == 'box' or self.backward_type == 'all':
                for j in range(4):
                    score = pre_post_boxes[i, j]
                    score.backward(retain_graph=True)

            # process heatmap: the newest gradients are at the front of grads.gradients
            if self.backward_type == 'class':
                gradients = grads.gradients[0]
            elif self.backward_type == 'box':
                gradients = grads.gradients[0] + grads.gradients[1] + grads.gradients[2] + grads.gradients[3]
            else:
                gradients = grads.gradients[0] + grads.gradients[1] + grads.gradients[2] + grads.gradients[3] + \
                            grads.gradients[4]
            b, k, u, v = gradients.size()
            # The class is passed in place of `self`; this relies on these
            # get_cam_weights implementations using only `activations` and `grads`.
            # .cpu() lets this also work when gradients live on the GPU.
            weights = self.method.get_cam_weights(self.method, None, None, None, activations,
                                                  gradients.cpu().detach().numpy())
            weights = weights.reshape((b, k, 1, 1))
            saliency_map = np.sum(weights * activations, axis=1)
            saliency_map = np.squeeze(np.maximum(saliency_map, 0))
            saliency_map = cv2.resize(saliency_map, (tensor.size(3), tensor.size(2)))
            saliency_map_min, saliency_map_max = saliency_map.min(), saliency_map.max()
            if (saliency_map_max - saliency_map_min) == 0:
                continue  # flat map, nothing to show
            saliency_map = (saliency_map - saliency_map_min) / (saliency_map_max - saliency_map_min)

            # overlay the heatmap on the image (draw_detections is not called, so no box is drawn)
            cam_image = show_cam_on_image(img.copy(), saliency_map, use_rgb=True)
            cam_image = Image.fromarray(cam_image)
            cam_image.save(f'{save_path}/{i}.png')


def get_params():
    params = {
        'weight': './weights/bz-yolov8-aspp-s-100.pt',  # path to the weights you want to visualize
        'cfg': './ultralytics/cfg/models/cfg2024/YOLOv8-金字塔結構改進/YOLOv8-ASPP.yaml',  # .yaml the weights above were trained with
        'device': 'cpu',  # 'cuda:0' for GPU 0, 'cpu' for CPU
        'method': 'GradCAM',  # GradCAMPlusPlus, GradCAM, XGradCAM
        'layer': 'model.model[6]',  # feature layer to visualize
        'backward_type': 'all',  # class, box, all
        'conf_threshold': 0.65,  # confidence threshold, default 0.65; adjust as needed
        'ratio': 0.02,  # fraction of top predictions to process, default 0.02; adjust as needed
    }
    return params


if __name__ == '__main__':
    model = yolov8_heatmap(**get_params())  # initialise
    # Arguments: input image path and output directory. The directory (e.g. ./result)
    # is created if needed; if it already exists, its contents are deleted first.
    model('output_002.jpg', './result')
```
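You can see how `ratio` and `conf_threshold` interact without loading a model. `post_process` ranks every candidate by its highest class score. The loop then visits only the first `int(N * ratio)` candidates and stops early at the first one below `conf_threshold`. The NumPy sketch below reproduces that selection. It assumes `raw` is the (4 + nc, N) head output for one image, laid out as `post_process` expects: box coordinates first, then class scores. `select_candidates` is a hypothetical helper and is not part of Ultralytics.

```python
from typing import List

import numpy as np


def select_candidates(raw: np.ndarray, ratio: float, conf_threshold: float) -> List[int]:
    """Indices of the candidates the heatmap loop would visualize (hypothetical helper)."""
    scores = raw[4:].max(axis=0)                  # best class score per candidate, shape (N,)
    order = np.argsort(-scores, kind="stable")    # highest score first
    picked = []
    for idx in order[: int(len(order) * ratio)]:  # only the top `ratio` fraction
        if scores[idx] < conf_threshold:          # sorted, so nothing later can pass
            break
        picked.append(int(idx))
    return picked


# Example: 2 classes, 100 candidates, three of them with non-zero scores
raw = np.zeros((6, 100))
raw[4, 10] = 0.9
raw[5, 20] = 0.7
raw[4, 30] = 0.5
print(select_candidates(raw, ratio=0.05, conf_threshold=0.65))  # [10, 20]
```

A 640x640 input gives YOLOv8 80x80 + 40x40 + 20x20 = 8400 candidates. With `ratio=0.02`, that caps the output at int(0.02 * 8400) = 168 heatmaps, and usually far fewer survive the confidence threshold.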